The use of artificial intelligence (AI) platform ChatGPT could raise some moral dilemmas for eye health professionals, but attempts to regulate it may be difficult, due to the evolving nature of the technology, an Adelaide ophthalmologist has said.

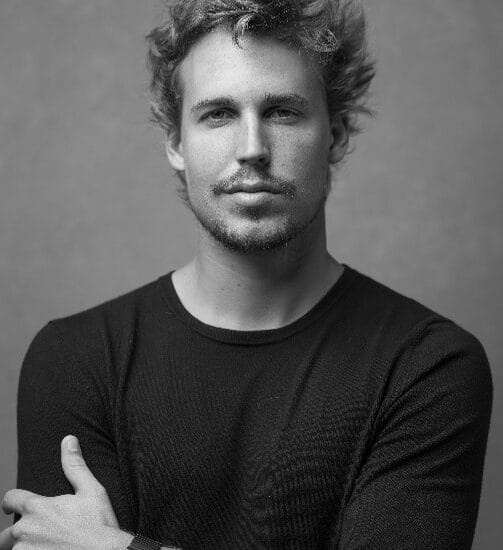

Dr Ben LaHood said ChatGPT is a “natural extension” of ophthalmic AI programs, which have been trained to detect diseases such as diabetic retinopathy, glaucoma, and age-related macular degeneration.

“AI is the way of the future. AI will be better than us in doing a lot of the tasks that we commonly do. ChatGPT is a little bit different in that it is not just looking at and analysing data, but actually creating content. So, it does feel a little more alive,” Dr LaHood told mivision.

WHAT IS CHATGPT?

ChatGPT is a large language model tool developed by OpenAI.1 Through a free user interface, you can ask ChatGPT to write emails, generate code, and produce stories or poems. It does so in a dialogue format, which makes it possible for ChatGPT to answer follow-up questions, challenge incorrect premises, and reject inappropriate requests.

OpenAI admits ChatGPT “sometimes writes plausible-sounding but incorrect or nonsensical answers”.1

In the United States, Dr Jeremy Faust, from online news platform MedPage Today, claimed ChatGPT “lied” by fabricating references. He asked the platform to diagnose a patient based on certain parameters. Dr Faust said that while the initial diagnosis was impressive, problems emerged when he asked for a reference to back up the conclusion.

“It took a real journal, the European Journal of Internal Medicine. It took the last names and first names, I think, of authors who have published in said journal. And it confabulated out of thin air a study that would apparently support this viewpoint,” Dr Faust wrote.2

Concerns like these about the accuracy of ChatGPT’s responses, and about its potential use for cheating, have led to bans on the platform.

Most Australian states have blocked access to the website in schools, while universities have updated academic integrity policies to specifically mention AI content as a form of cheating. Bot-generated content is also being banned by publishers of scientific journals across the globe.

USING AI IN EYE HEALTH

Dr LaHood said that, “from a moral perspective”, he would “definitely hope” eye health professionals would not rely solely on ChatGPT and similar programs in their current form for diagnosis and treatment, as we still have a lot to learn about their capabilities and shortcomings.

“A simple Google search of any health condition will provide extremes of opinion and management. This is the material being scraped from the internet for ChatGPT to work with. Do we need guidelines? I would like to think we don’t for the actual medical side of treating people and making decisions,” Dr LaHood said.

“For the academic side of things, I definitely predict that it will be abused… it would be wonderful to say to a program, ‘put together an article based around my data’.

“But the idea of restricting what’s able to be used is inherently flawed, it is going to improve to get around that. ChatGPT and similar programs will work out how to… make their content look as though it has been personally created by an individual.

“I do think it is going to be where the majority of written content is going to originate from in the future and we won’t be able to limit or even identify it for long. This technology will undoubtedly continue to evolve and improve as it has in just a few months already. Currently, my concern about using it for creating written content is that this new content is put online and creates a cycle of material being scraped and reworded over and over until it all becomes very similar. I liken it to mixing together different paint colours until you are left with one tone of brown, and all individual features are lost.”

Dr LaHood said he can see immediate uses for the platform for creating online content, standard brochures, or patient materials, “things that are time consuming and fairly generic”.

Even though the content would need to be checked for errors, the accuracy of the platform will no doubt improve over time, he concluded.